Invest in AI, Avoid the Bubble - Ep. 4/4

What to avoid

The content of this analysis is for entertainment and informational purposes only and should not be considered financial or investment advice. Please conduct your own thorough research and due diligence before making any investment decisions and consult with a professional if needed.

In this fourth and final episode of Invest in AI, Avoid the Bubble, I’m exploring the remaining companies I’m invested in, explaining why—despite not appearing tied to AI—they actually are AI plays. I’ll also comment on the other categories in the AI Industries and why I don’t have any investments in them.

Let’s start with the latter.

AI-Powered Applications

AI-powered applications are smart software programs tailored for specific tasks, like adding AI to your sales dashboard to predict deals (Salesforce’s Einstein is a classic example). The barrier to entry is low—anyone can tweak existing AI with simple tools. The key to survival? Own unique data from your niche or build a user base that keeps coming back, creating a loop of more data and better service. Without that, it’s fun for a bit, but poof—gone when the hype cools.

Distribution and stickiness are among the best competitive advantages in this category. Think of companies that are gatekeepers for specific use cases, like CAD companies. A few tools dominate entire industries, and loyal users who developed their skills on those specific tools rarely switch. If these companies are flexible enough to upgrade their tools with AI-powered features, they win. These companies can be smart plays, but I’m not invested in them because they won’t deliver the returns I expect for the L2C portfolio. They’re mature businesses that are unlikely to grow aggressively.

Another example in this category is Palantir ($PLTR). Palantir is redefining industries by building a new kind of AI-based operating system for running a business. It’s one of the essential enablers of the AI revolution. I held the stock from the inception of the L2C portfolio (Jan 2024) until I exited in 2025. The reason isn’t that I no longer believe in Palantir, but simply that its valuation made it less attractive than other stocks in the portfolio. So far, I haven’t found another stock in this category that has sparked enough conviction.

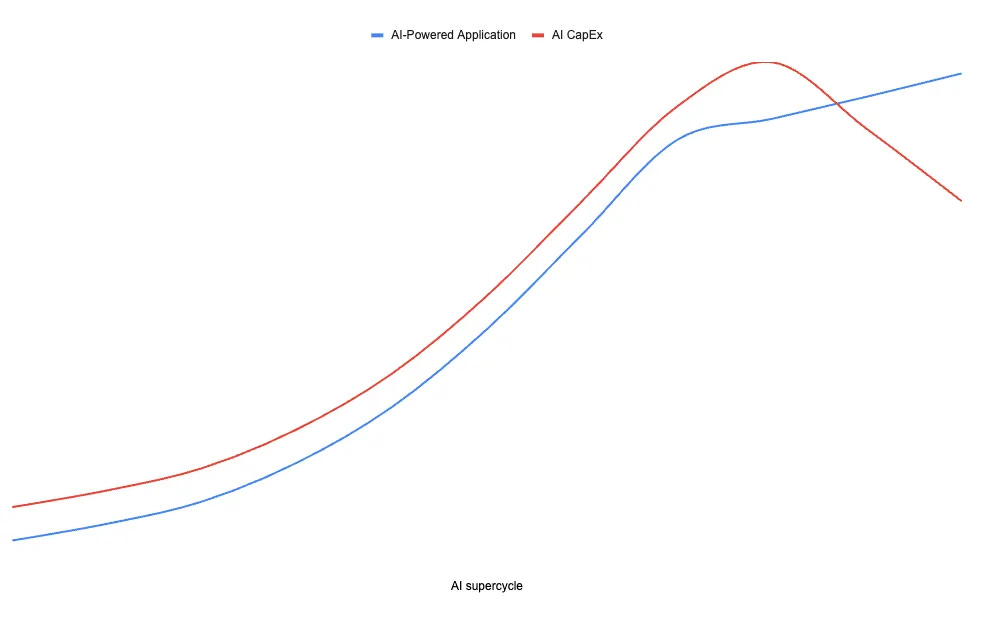

This is the revenue trend I expect from this category, alongside the AI Capital Expenditure trend, assuming demand—and therefore CapEx—lasts long enough.

As you may have noticed, I expect it to look qualitatively similar to the AI infrastructure cycle: rising with the AI CapEx curve, then tapering off once the hype cools down.

AI-Model

These are the foundational AI models, such as large language models (LLMs), that power chatbots and code assistants. Thanks to the explosion of open and “open‑weight” models, many of these capabilities can now be downloaded, fine‑tuned, or reused by anyone with enough skill. Even Nvidia has released high‑quality open models under permissive licenses, so teams can adapt them for their own products without starting from scratch.

Because of this, I think pure “model quality” by itself is becoming less defensible over time. Closed models will increasingly keep their edge where the bar is much higher: specialized domains like coding assistants trained on massive proprietary codebases, or biology models tuned on hard scientific and clinical data.

In practice, sustainable moats cluster around three things working together:

Hard verticals: you solve a difficult, narrow problem where data, regulation, or expertise make it hard to copy you.

Distribution: you sit where users already are—inside the main app, operating system, search bar, or workflow they use every day.

Integration: your model is wired into the real workflow and systems of record, not just a chatbox. It sees the right data, triggers actions, updates dashboards, respects permissions, and closes the loop.

Take a CAD vendor as an example. If they build an AI copilot directly into the design tool that engineers already live in 8 hours a day, trained on years of proprietary design data, that assistant is very hard to displace. A slightly “better” generic model living on a separate website is much less attractive, because it has no access to the same data, no tight integration with the workflow, and no default distribution.

The same logic applies to players like Palantir in enterprise operating systems, or to a future “AI operating system” for factories, hospitals, or logistics. The moat is not just the model. It’s the combination of: hard vertical problem, proprietary data, default distribution, and deep integration.

For most everyday tasks (summarizing emails, answering questions, basic reasoning), the gap between leading open and closed models is already small enough that I don’t expect much of a lasting moat at the “generic chatbot” level. That’s why my view is simple: only pursue models that are laser‑focused on hard vertical problems and backed by control of the distribution channels where users actually show up. That’s where durable value accumulates.

I expect this category to follow a similar revenue trend alongside AI Capital Expenditure, assuming demand—and CapEx—lasts long enough.

AI-Silicon

Silicon is the physical layer of AI: the chips that make everything else possible, like Nvidia’s GPUs crunching massive amounts of data. This is where the system hits real-world limits. Advanced manufacturing capacity is tight, lead times are long, and every new fab costs tens of billions to build. You can’t “move fast and break things” when it takes years and huge capital just to add more supply.

On its own, though, even the best chip is not enough. The real moat shows up when you pair the hardware with a sticky software layer and tight integration into AI workloads. That’s when customers stop thinking in terms of a “GPU” and start thinking in terms of a platform they can’t live without.

Nvidia is the clearest example. CUDA and the broader Nvidia software stack have become the default environment for AI developers. Most frameworks, tools, and libraries are optimized first for Nvidia, which means teams naturally build and tune their systems around Nvidia GPUs. Moving away is possible in theory, but in practice it means rewriting code, retraining models, and rebuilding a lot of internal tooling. That’s a powerful kind of lock‑in.

The same logic applies at the cloud level. Hyperscalers like AWS, Azure, and others sign huge multi‑year deals to deploy Nvidia systems at scale and expose them as managed services. These agreements channel tens of billions of dollars of AI demand into a small number of tightly integrated hardware‑plus‑software platforms. If your workloads are built on those stacks, with their specific drivers, compilers, and orchestration tools, switching is painful.

So the lesson from AI-Silicon is simple:

Chips alone are a good business.

Chips plus a deep software ecosystem and distribution through the big clouds can become a fortress.

Without that software “glue” and distribution, even the most advanced GPU risks becoming just another commodity part in a crowded supply chain.

This is the revenue trend I expect from this category, alongside the AI Capital Expenditure trend.

AI-Power

Finally, power—the electricity fueling it all. AI infrastructure is extremely energy-intensive, and datacenter operators are already competing for grid capacity and new generation facilities. At first glance, power looks like a pure commodity with no edge—everyone buys electrons from the same market, right?

Not quite. While electricity itself is fungible, strategic advantages do exist: locking in cheap power through long-term purchase agreements, building near abundant generation sources (hydro, nuclear, natural gas), or even owning generation assets outright. Companies that secure favorable energy access early can maintain cost advantages that persist even when spot prices swing. However, these moats are tied to location, capital, and contracts—not proprietary technology or network effects.

For the L2C portfolio, I see power as table stakes, not a high-conviction opportunity. It’s critical infrastructure, but the returns and defensibility don’t match what I’m targeting with my other AI plays. So while energy strategy matters for hyperscalers and neocloud providers (like Nebius securing datacenter power), I’m not investing directly in power generation or utilities.

This is the revenue trend I expect from this category, alongside the AI Capital Expenditure trend.

My other picks

Rocket Lab ($RKLB)

The leading public space company focused on small-to-medium launch vehicles and space systems. Rocket Lab is building an end-to-end space company, aiming to compete with SpaceX. So far, it has successfully scaled a small launch vehicle (Electron) from the ground up, and is now preparing to enter the medium-lift market in 2026 with its Neutron rocket, targeting a segment currently dominated by SpaceX’s Falcon 9. The company’s competitive edge comes from vertical integration—manufacturing nearly all components in-house—and exceptional leadership under founder Peter Beck.

Why is this an AI play? Space infrastructure is becoming critical to AI’s future. AI models need massive amounts of data, and much of that data will come from space: Earth observation satellites for climate modeling, agricultural optimization, disaster response, and autonomous systems. Additionally, as AI compute demands grow, there’s serious discussion about space-based datacenters to access abundant solar power and natural cooling. Rocket Lab provides the launch services and satellite manufacturing capabilities to make this infrastructure possible. As AI drives demand for more satellites and space-based services, Rocket Lab is positioned to benefit directly from that secular growth trend.

I expect these characteristics will make Rocket Lab compound for the foreseeable future. $RKLB is already a multibagger for me (about 10x).

Check https://www.lorenzo2cents.com/p/rocket-lab-articles to learn more, and subscribe to Lorenzo2cents (L2C) Premium to access the details of the allocation, my strategies, and much more.

Mercado Libre ($MELI)

The backbone of Latin America’s digital economy, poised to benefit from continent-level network effects: a full‑stack “Amazon e‑commerce + PayPal fintech” platform that uses its scale, logistics, and data to build an unbeatable commerce moat, which in turn feeds a sticky, high‑margin fintech ecosystem across payments, credit, and financial services.

Why is this an AI play?

Mercado Libre is quietly becoming an AI infrastructure layer for LatAm’s consumer economy. It uses AI to optimize logistics and delivery routes, fight fraud, score credit risk, and personalize recommendations across commerce and fintech. On top of that, it is rolling out AI‑generated ads so even small sellers get high‑performing creatives, and building AI‑powered internal systems to drive real‑time decisions at scale.

The result: MELI doesn’t just benefit from AI demand—it is turning its data, distribution, and infrastructure into an AI‑native rails system for commerce and finance in Latin America.

I expect these characteristics will make Mercado Libre compound for the foreseeable future.

Check https://www.lorenzo2cents.com/p/mercado-libre-articles to learn more, and subscribe to Lorenzo2cents (L2C) Premium to access the details of the allocation, my strategies, and much more.

Lorenzo2cents Portfolio strategy for 2026

The current L2C Portfolio allocation to $RKLB is about three times the optimal allocation suggested by the “Allocation_Optimizer” Tool, while the allocation to $MELI is about half of what’s recommended.

If you’re unfamiliar with this, please review https://www.lorenzo2cents.com/p/l2c-lesson-2-mastering-portfolio.
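
To make the "three times" and "about half" comparison above concrete, here is a minimal Python sketch of the arithmetic. It is not the Allocation_Optimizer tool and does not reflect how that tool works. The function names, the 10% tolerance, and the portfolio weights are all hypothetical, chosen only to reproduce the ratios mentioned in the text.

```python
def allocation_ratio(current_weight: float, optimal_weight: float) -> float:
    """Return the current weight divided by the suggested optimal weight."""
    if optimal_weight <= 0:
        raise ValueError("optimal_weight must be positive")
    return current_weight / optimal_weight


def describe(ratio: float, tolerance: float = 0.1) -> str:
    """Label a position relative to its suggested weight (tolerance is an assumption)."""
    if ratio > 1 + tolerance:
        return "overweight"
    if ratio < 1 - tolerance:
        return "underweight"
    return "on target"


# Hypothetical weights in percent of portfolio. These are NOT the real
# L2C allocations; they only reproduce the "about 3x" and "about half" ratios.
positions = {
    "RKLB": {"current": 15.0, "optimal": 5.0},
    "MELI": {"current": 4.0, "optimal": 8.0},
}

for ticker, weights in positions.items():
    ratio = allocation_ratio(weights["current"], weights["optimal"])
    print(f"{ticker}: {ratio:.1f}x the suggested weight ({describe(ratio)})")
```

A ratio above 1 means the position is larger than the suggested weight, and a ratio below 1 means it is smaller. How to act on that gap is a strategy decision the sketch does not attempt to model.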